Selected Work

✣HOJB — Research · Product

Lie to Me

"A behavioral analysis system that reads faces, voices, bodies, and words — and caught Lance Armstrong on the first try."

Trained from scratch on the Real-Life Trial Dataset — 121 labeled courtroom testimony clips. Four extractors run in parallel: DeepFace reads facial emotion, librosa extracts pitch and MFCCs, MediaPipe tracks gaze aversion and postural shifts, and Whisper transcribes speech for linguistic pattern analysis.

A Random Forest fusion model learns which combinations of signals predict deception. AUC-ROC is 0.94 on held-out test data, above the published baseline of 0.77–0.82 from the original paper. Deployed as a live web app on Hugging Face Spaces.
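
For a rough sense of what "fusion" means here, the sketch below flattens per-modality outputs into one named feature row that a tree-based model could consume. Only the `emo_surprise` name comes from this page; the other feature names, the prefixes, and all values are hypothetical, and the project's actual feature layout may differ.

```python
from typing import Dict, List, Tuple

def fuse_features(modalities: Dict[str, Dict[str, float]]) -> Tuple[List[str], List[float]]:
    """Flatten per-modality features into one named, consistently ordered row."""
    names: List[str] = []
    values: List[float] = []
    for prefix in sorted(modalities):            # fixed modality order
        for key in sorted(modalities[prefix]):   # fixed feature order within a modality
            names.append(f"{prefix}_{key}")
            values.append(float(modalities[prefix][key]))
    return names, values

# Hypothetical extractor outputs for a single clip (values are made up).
clip = {
    "emo": {"surprise": 0.31, "fear": 0.12},      # facial emotion scores
    "aud": {"pitch_mean": 182.4},                 # audio features
    "body": {"gaze_aversion": 0.27},              # pose and gaze features
    "txt": {"first_person_rate": 0.04},           # linguistic features
}
names, row = fuse_features(clip)
print(list(zip(names, row)))
```

Sorting the keys keeps the column order identical across clips, which matters because the fusion model only sees positions, not names.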

Out-of-Domain Validation

Tested on Lance Armstrong's doping denial interview — the model flagged it as deceptive via low first-person pronoun use, consistent with Pennebaker's psychological distancing research. The model had never seen footage outside a courtroom.
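
A minimal sketch of the linguistic signal mentioned above: the share of tokens that are first-person singular pronouns. The pronoun list, the tokenizer, and the function name are assumptions for illustration, and the example sentences are invented rather than quoted from any interview.

```python
import re

# Assumed pronoun set: first-person singular only.
FIRST_PERSON = {"i", "me", "my", "mine", "myself"}

def first_person_rate(transcript: str) -> float:
    """Fraction of word tokens that are first-person singular pronouns."""
    tokens = re.findall(r"[a-z]+(?:'[a-z]+)?", transcript.lower())
    if not tokens:
        return 0.0
    # Strip contractions so "I'm" counts as "i".
    hits = sum(1 for t in tokens if t.split("'")[0] in FIRST_PERSON)
    return hits / len(tokens)

# Invented example sentences.
print(first_person_rate("I did not do it. I swear on my life."))
print(first_person_rate("That never happened. The tests were clean."))
```

A lower rate means the speaker refers to themselves less often, which is the distancing pattern the model picked up on.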

AUC-ROC — Real Data

0.94

vs published baseline of 0.77–0.82

Top Signal

emo_surprise

Hardest emotion to consciously fake

Training Data

121

Real-Life Trial Dataset courtroom clips

Modality Breakdown — Lance Armstrong

✣HOJB — Personal Project

Wordle.AI

"Two AIs walk into a Wordle. One studied the answers. One figured it out alone."

Live Model Toggle

Built two Wordle solvers using different learning paradigms — one trained on information-theoretically optimal games, one that learned purely from winning and losing. Deployed both behind a single API with a live toggle so you can watch them think differently in real time.

Benchmarked across 2,315 words. The supervised-learning (SL) model achieves a perfect win rate at 3.46 avg guesses. The reinforcement-learning (RL) model reaches 98.2%, trained without seeing a single optimal solution.
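
To make "information-theoretically optimal" concrete, here is a small sketch of the usual way to score a guess: compute the feedback pattern against every remaining candidate, then take the Shannon entropy of the pattern distribution. The function names and the four-word candidate list are illustrative assumptions, not the project's code.

```python
import math
from collections import Counter
from typing import Iterable, Tuple

def feedback(guess: str, answer: str) -> Tuple[int, ...]:
    """Per-letter marks: 2 = green, 1 = yellow, 0 = grey."""
    marks = [0] * len(guess)
    unmatched = Counter()
    # First pass: mark greens and count answer letters not matched in place.
    for i, (g, a) in enumerate(zip(guess, answer)):
        if g == a:
            marks[i] = 2
        else:
            unmatched[a] += 1
    # Second pass: mark yellows, consuming unmatched answer letters left to right.
    for i, g in enumerate(guess):
        if marks[i] == 0 and unmatched[g] > 0:
            marks[i] = 1
            unmatched[g] -= 1
    return tuple(marks)

def expected_information(guess: str, candidates: Iterable[str]) -> float:
    """Entropy in bits of the feedback pattern, assuming equally likely answers."""
    patterns = Counter(feedback(guess, word) for word in candidates)
    total = sum(patterns.values())
    return -sum((n / total) * math.log2(n / total) for n in patterns.values())

# Toy candidate list; a real solver would use the full answer list.
words = ["crane", "crate", "slate", "trace"]
print(expected_information("crane", words))
```

On this toy list CRANE produces a different pattern for each of the four candidates, so the guess yields the full 2 bits needed to identify the answer.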

Emergent Result

The RL model independently converged to opening with CRANE — the same first guess as the supervised model — without ever being told it was good. It discovered information-optimal play through trial and error alone.

Win Rate — SL Model

100%

All 2,315 Wordle words solved

Win Rate — RL Model

98.2%

No optimal training data used

Opening Word (both models)

CRANE

Emergent — RL converged here independently

✣HOJB — Research

Swahili Emotion Model

"Fine-tuning AfroLM to understand emotion in a language the internet underserves."

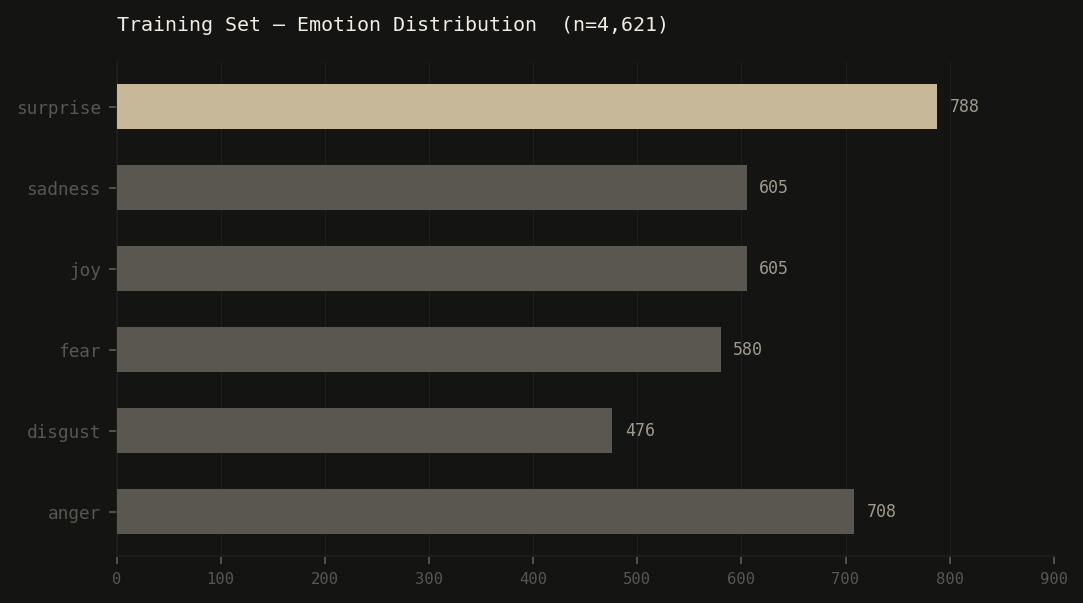

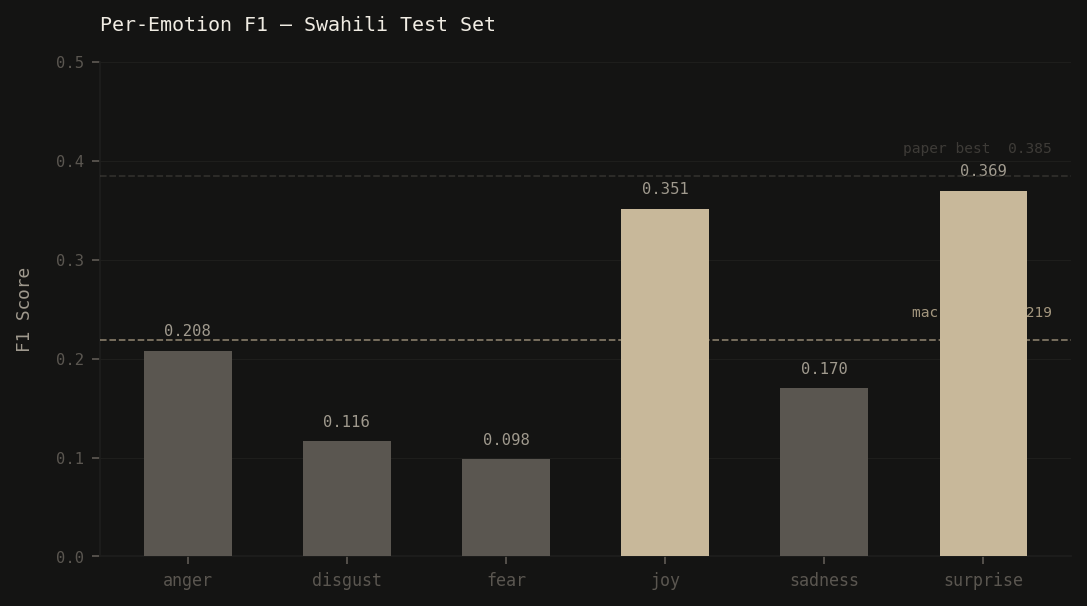

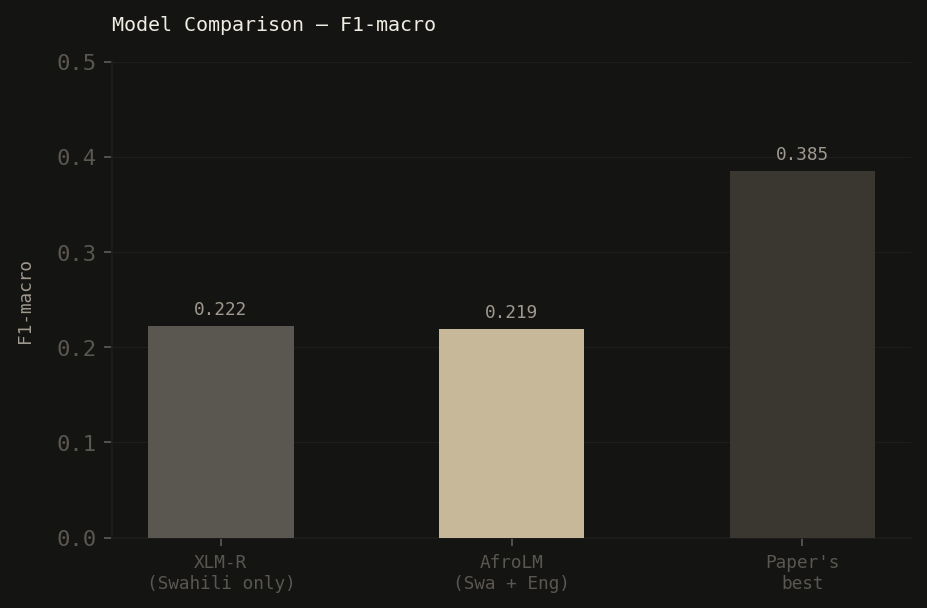

Fine-tuned AfroLM for multi-label emotion classification in Swahili, trained on the BRIGHTER dataset (3,307 examples) augmented with cross-lingual English data for a combined 4,621 training samples across 6 emotion classes.

Part of a broader interest in applying ML to problems that matter outside the English-speaking world. The model identifies anger, disgust, fear, joy, sadness, and surprise from Swahili text.

Cross-lingual Training

Combining Swahili with English data via AfroLM's multilingual backbone improved macro F1 from 0.188 to 0.219, which suggests that cross-lingual transfer helps even when target-language data exists.
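
For readers unfamiliar with the metric, the sketch below shows how macro F1 is computed for multi-label predictions: one F1 score per emotion, averaged without weighting. The toy labels are invented, and scoring a class with no true or predicted positives as 0 is a convention chosen here, not necessarily the one used in the reported numbers.

```python
from typing import List, Sequence

LABELS = ["anger", "disgust", "fear", "joy", "sadness", "surprise"]

def macro_f1(y_true: Sequence[Sequence[int]], y_pred: Sequence[Sequence[int]]) -> float:
    """Unweighted mean of per-class F1 over multi-hot label rows."""
    n_classes = len(y_true[0])
    scores: List[float] = []
    for c in range(n_classes):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t[c] == 1 and p[c] == 1)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t[c] == 0 and p[c] == 1)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t[c] == 1 and p[c] == 0)
        denom = 2 * tp + fp + fn
        # Assumed convention: F1 is 0 when the class has no positives at all.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / n_classes

# Invented toy data: three texts, one column per label in LABELS.
y_true = [[1, 0, 0, 0, 0, 0], [0, 0, 0, 1, 0, 0], [0, 0, 1, 0, 1, 0]]
y_pred = [[1, 0, 0, 0, 0, 0], [0, 0, 0, 1, 0, 1], [0, 0, 0, 0, 1, 0]]
print(macro_f1(y_true, y_pred))
```

Because every class counts equally, rare emotions that the model misses pull the average down sharply, which is part of why macro F1 scores on small multi-label datasets tend to be low.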

Training Data

4,621

Swahili + English combined

F1-macro

0.219

vs paper best of 0.385

Base Model

AfroLM

bonadossou/afrolm_active_learning

✣HOJB — Research · Systems

OMEGA

"A Transformer that watches crypto, stocks, and forex every hour — and only speaks when it's confident enough to act."

| Pair | Action | ↑ UP | ↓ DN | Conf | Thr | Kelly | Hi-Conf Acc |
|---|---|---|---|---|---|---|---|
| USD/JPY | 🔴 SELL | 47.4% | 52.6% | 52.6% | 50.0% | 2.6% | 68.0% |
| USD/CHF | 🟢 BUY | 56.1% | 43.9% | 56.1% | 54.0% | 6.1% | 61.4% |
| EUR/USD | — HOLD | 51.2% | 48.8% | 51.2% | 55.0% | — | Benched |

Two independent Transformer models, one per market domain: one for crypto and stocks (CryptoCompare + Polygon.io) and one for forex pairs (Twelve Data). Both share the same backbone: three pre-norm TransformerEncoderLayer blocks with 4 heads, a 24-bar sequence window, and a softmax over UP/DOWN probabilities.

The system runs autonomously via GitHub Actions every hour: fetch → indicators → scale → infer → threshold → Kelly-size → Discord post → resolve last hour's outcomes → commit logs. The forex model adds temperature scaling and per-pair confidence thresholds calibrated on a strictly chronological validation set.
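
Temperature scaling, as mentioned above, divides the logits by a scalar T before the softmax so that the reported probabilities better match observed hit rates. The sketch below shows only the scaling step; the logits and the value of T are hypothetical, and how OMEGA fits T on its validation set is not shown here.

```python
import math
from typing import List, Sequence

def softmax_with_temperature(logits: Sequence[float], temperature: float) -> List[float]:
    """Softmax over logits / T; T > 1 softens probabilities, T < 1 sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)                           # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical (UP, DOWN) logits and temperature.
raw = softmax_with_temperature([0.8, 0.2], temperature=1.0)
calibrated = softmax_with_temperature([0.8, 0.2], temperature=2.0)
print(raw, calibrated)
```

With T above 1 the UP probability moves closer to 50%, so fewer hours clear the confidence threshold. The predicted direction never changes, only how strongly it is stated.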

Design Decision — Silence is a Signal

OMEGA only acts when max(P(UP), P(DOWN)) ≥ threshold. Everything else is HOLD. Most hours it says nothing — that's intentional. EUR/USD and GBP/USD are benched entirely until session-aware features (London/NY overlap) are proven to add edge. Discipline over activity.
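
The gating rule can be sketched in a few lines, together with the half-Kelly sizing from the pipeline. The even-odds Kelly formula (2p − 1) is an assumption on my part: it reproduces the Kelly column in the table above, but the project's exact payoff model is not stated. The function names are illustrative.

```python
from typing import Optional, Tuple

def half_kelly_fraction(p_win: float, cap: float = 0.20) -> float:
    """Half of the even-odds Kelly fraction (2p - 1), clipped to [0, cap]."""
    full_kelly = 2.0 * p_win - 1.0   # assumes a symmetric win/loss payoff
    return min(max(0.5 * full_kelly, 0.0), cap)

def decide(p_up: float, threshold: float) -> Tuple[str, Optional[float]]:
    """Return (action, position fraction); HOLD carries no position."""
    p_down = 1.0 - p_up
    confidence = max(p_up, p_down)
    if confidence < threshold:
        return "HOLD", None
    action = "BUY" if p_up > p_down else "SELL"
    return action, half_kelly_fraction(confidence)

print(decide(0.474, 0.50))  # USD/JPY row: SELL, about 0.026
print(decide(0.561, 0.54))  # USD/CHF row: BUY, about 0.061
print(decide(0.512, 0.55))  # EUR/USD row: HOLD, no position
```

The 20% cap only binds at very high confidence (90% or more under this formula), so at the confidence levels shown in the table the position size is driven entirely by how far the probability sits from 50%.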

Hi-Conf Accuracy — USD/JPY

68.0%

50 signals on chronological test set

Hi-Conf Accuracy — USD/CHF

61.4%

44 signals · threshold 0.54

Position Sizing

Half-Kelly

Capped at 20% of allocated capital

Inference Cadence

Hourly

GitHub Actions · auto-commits outcome logs